Welcome & News › Forums › Alumni Discussion Board › AI: Hype, Reality — and Why the Alumni Association Is Getting Involved

- This topic has 6 replies, 3 voices, and was last updated 2 months, 2 weeks ago by

Pete Smith.

Pete Smith.

-

AuthorPosts

-

7th January 2026 at 21:14 #60468

You can’t have failed to notice that AI is the latest, greatest, most-hyped snake oil being offered up by the tech world. Many of us will remember similar enthusiasm around the millennium bug, dot-coms, or more recently every new “G” added to mobile networks. Some of that really did change the world. Some of it quietly disappeared.

AI may yet go either way.

If anyone tells you they know exactly where AI is heading, you can be confident of one thing: they are trying to sell you something. It might transform medicine, food systems, and climate science. Or it might crash and burn under the weight of its own costs and promises. At this point, both outcomes remain highly plausible.

What is clear is that AI isn’t one thing.

A useful way to think about it is in three broad families:

- Predictive AI – the quiet kind that has existed for years, spotting patterns in data and making forecasts or risk assessments in the background.

- Generative AI – the chatty kind (ChatGPT and similar tools) that can draft text, summarise information, or help structure thinking. Impressive, but capable of sounding confident while being wrong if not grounded in real sources.

- Specialist AI – systems that become superhuman at one narrow task, such as chess, maths, logistics optimisation, or specific medical analysis.

Most of what we encounter today sits somewhere in those categories, rather than resembling anything like human intelligence. There is lots of media discussion on that ‘human intelligence’ point from very smart people – start following Professor Hannah Fry on youtube if you want to listen to a serious mathematician with a deep understanding of data and AI. You’ll hear people talking about Artificial General Intelligence (AGI) in this context. If and when we get there, probably the best name for that will be ‘Sir’, or of course ‘Ma’am’.

So what has the Alumni Association done, and why?

Over the past few months, we’ve built a small experimental tool called Eglantyne. It is not chatGPT although it does rest on the same OpenAI technology, and it is certainly not a ‘finished’ solution. In plain English, it’s a research assistant that can search our curated humanitarian news archive and a small number of verified external sources (excluding social media), and then produce summarised, cited answers to questions.

It does not trawl the open internet indiscriminately. It shuns anything anyone has said on social media. It does not invent sources. And it is deliberately constrained to humanitarian topics we care about.

Why does this matter to alumni?

The biggest losses in today’s humanitarian sector relate to the removal of funding and the work not being undertaken. But another serious consequence relates to institutional memory. Field experience fades. Context gets lost. Lessons learned are left to be rediscovered the hard way by the next generation.

Our interest in AI is not about replacing judgement or expertise. It’s about asking a simple question:

Could a tool like this help us preserve, organise, and share what we already know — better than we do today?

Eglantyne is a first, tentative step in that direction. It’s lightweight, imperfect, and occasionally clumsy. In a few years’ time we may look back on it with mild embarrassment — much as parents insist on showing baby photos. But it’s a place to start.

At the end of this post you’ll find:

- a link to a longer explainer for those who want more depth, and

- a link to real example questions and answers generated by the tool.

- A call to action – what YOU can do next to help us not only preserve our institutional memory, but make it accessible ot those who follow.

I’d encourage especially the most sceptical (and you know who you are!) to take a look.

The more interesting question isn’t whether the answers are perfect — they aren’t — but whether they are heading in the right direction. And whether, with alumni contributions, they could become more grounded, more reflective, and more useful.

And that’s the big one. The Call to Action. Our current News Archive is solid for a fairly pathetic three months. It will take years to build up. We’ve gone back in time where we can, but it’s patchy. What would provide much richer content, much stronger answers, would be targeted articles from Alumni. Our institutional knowledge and experience.

AI may turn out to be hype. Or it may become genuinely valuable. Either way, this feels like a bet to nothing: try carefully, learn openly, and see where it leads.

I’ve put into this thread replies covering three further topics (sections will be completed over the next few days) – read as much or as little as you wish!

- A longer explainer of AI if you want to know more depth about ow it works (I suspect that’s just me interested in this)

- A link to a real example Q& A – though you can always just go and try yourself here!

- Some thoughts on the Call to Action – what are we looking for, how might it work?

Comments?

-

This topic was modified 3 months, 3 weeks ago by

Pete Smith.

Pete Smith.

-

This topic was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

-

This topic was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

-

This topic was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

-

This topic was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

-

This topic was modified 2 months ago by

Pete Smith.

Pete Smith.

9th January 2026 at 11:52 #60600AI – Magic or Science?

With such a diverse audience as our alumni, it’s always tricky deciding how much detail to put into articles. Here is some further detail if you are interested in what’s behind AI, but by all means skip down to the actual example I’ve put in the next reply.

What actually is AI?

All forms of AI rest on two core elements

- The work of a wondrously obscure 18th century British mathematician Thomas Bayes (1701-1761), who had a profound insight on probability theory giving us what we now call Bayesian stats. His obscurity was hard-earned by publishing exactly zero papers in his lifetime. Impressive for someone who is shaping the 21st century.

- And secondly, AI needs an almighty amount of computing power. Huge data farms that can process in parallel the vast amounts of data required to make Mr Bayes’s sums work. Techs call these computing centres ‘neural networks’, the rest of humanity tend to struggle in disbelief, ‘How much did you say they cost?’

What are Bayesian stats – how different is it to what I did at school ?

It’s a slightly different and many people argue very intuitive way of looking at stats. As so often, it’s best to describe with an example:

Step 1 – I toss a coin twice and it’s heads both times. What are the odds when I toss the coin a third time?

Most people would say ’50:50’ as the coin has no memory of what has occurred before.

Step 2 – I continue the experiment and toss the coin a further 18 times. It’s heads each time. What are the odds when I go to toss the coin a 21st time?

Traditionally, statisticians would say ’50:50’ because the coin still doesn’t have a memory. Quite a few people might though say ‘Hang on, something’s up here, I reckon that coin is fixed.’

And that is the application of Bayesian statistics.

With more data, an eventuality that was so unlikely after 2 coin tosses that the scenario wasn’t even worth a mention becomes the most probable explanation of the events.

Thomas Bayes wrote down a simple formula to calculate this. He seized on what might seem an obvious point – if the coin was fixed after 20 throws, it was obviously fixed after 1, it’s just we had no evidence. So there WAS a probability of the coin being fixed at the start, it’s just that it was perhaps a million to one, and wasn’t worth mentioning. With each toss of the coin though, those million to one odds drop sharply, to the point where after 20 tosses (or before) it’s actually more likely that the coin is fake than a true coin is returning all those heads.

The formula Thomas Bayes worked out to do this is in fact very simple – a lot easier than those various distribution curves you might have suffered with. You’d expect now a 15 or 16 year old to do the calculation as part of their schoolwork fairly easily.

And why does this matter? Because the formula can be put into a computer program, and then with enough data we can move away from the idea that computers are binary ‘yes/no’ to a world where they think in terms of probabilities. And that is so important, as it enables pattern recognition – whether its written words, images or voices.

AI is essentially pattern recognition on steroids. It answers questions not by knowledge, but by finding similar patterns of words in the truly vast amount of text it has access to.

How does that tie to the types of AI?

One day, there might be one thing called AI, though if there is we’ll probably call it AGI (Artificial General Intelligence) if we get there.

Until then, most of what we call AI today falls into three broad buckets:

1) Predictive AI (the quiet kind)

This is the AI that’s been around for years inside many organisations: spotting patterns in data and making predictions. For example, forecasting demand, flagging anomalies, or helping prioritise risk. You often don’t ‘chat’ to it — it runs in the background. Pattern recognition is about probability, which is where our friend Thomas comes in.2) Generative AI (the chatty kind — GPT and friends)

This is what people mean when they say ChatGPT. It can write, summarise, translate, draft emails, and help you think through problems. It’s powerful, but it can also sound confident while being spectacularly wrong if it’s not carefully grounded in real sources. It doesn’t actually ‘understand’ very much at all. It has though read more or less everything humans have ever written (technically that’s called a Large Language Model, or LLM), and is really good at putting together sentences that appear to make sense. It does this by spotting pattens in sentences it’s read compared to what you’ve asked … which is of course just a probability problem involving huge amounts of data. Mr Bayes and those expensive neural networks again.3) Specialist AI (the superhuman-at-one-thing kind)

These are systems designed to excel at a specific task with clear rules and feedback — like chess. That’s why AI chess engines can be astonishingly strong, while a general chat system like ChatGPT will struggle if you ask it to play.But while chess has a fairly narrow set of rules, literally month on month the boundaries are being pushed. Google’s DeepThink is trained to solve mathematical problems. It can now (early 2026) compete at elite IMO (International Mathematical Olympiad) gold-medal level – that’s roughly equivalent to the top 50 18-year-old maths humans on the planet. A year ago, it couldn’t compete with the top 5,000. It’s quite possible that within a year, it will out-perform all humans that have ever lived in Algebra (admittedly, the easiest of the seven major mathematical disciplines, though it didn’t feel like that at school).

Could this form of AI find a cure for cancer? Yes, and many people wish everyone would just focus on that and stop all the rest of the hype.

How do they do this? The answer now should be obvious. It’s Bayesian stats with more computer power than even Turin ever dreamed of. And yes, he did dream of AI – in many senses this belongs to him at least as much as it does to Mr Bayes.

The key takeaway: AI today isn’t one thing. It’s a family of tools. It works by a combination of the application of a clever insight into probability that identities that potential factors change their importance when you consider more data, together with a ridiculous amount of computing power.

-

This reply was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

-

This reply was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

9th January 2026 at 11:59 #60602Example Question and Response

Below I’ve copied and pasted a ‘model’ question and answer from Eglantyne. It is by no means perfect – the really interesting question is how could we make it better. I’ll address that in the ‘Call to Action’ reply below.

By all means go to Eglantyne on this site and try your own question!

You:

Can you provide a comprehensive analysis comparing the work of Save the Children in Sudan with that of MSF in Gaza these last 2 months. I’d like maybe 2000 words with as much information as you can assess.Eglantyne:

Summary

Over the last two months, Save the Children in Sudan and Médecins Sans Frontières (MSF) in Gaza have been operating under extremely challenging conditions, responding to two of the most severe humanitarian crises globally. Save the Children’s work in Sudan focuses on addressing the consequences of prolonged conflict, with a massive scale of child displacement, acute food insecurity, attacks on healthcare, and a severe education crisis. Their integrated approach includes mobile health clinics, nutrition, child protection, and education services reaching over a million people, including hundreds of thousands of children.

In Gaza, MSF’s operations are critically constrained by Israeli restrictions, including new registration rules threatening their ability to deliver aid. The humanitarian situation is dire, with children facing life-threatening cold amid winter storms, severe hunger, and blocked aid flows. MSF has repeatedly warned of child deaths due to cold and lack of medical supplies, while the blockade and administrative barriers severely limit humanitarian access and response capacity.

Key Findings

-

- Scope and scale of humanitarian response: Save the Children in Sudan has reached over 1.3 million people, including 715,000 children, through a comprehensive package of services such as mobile health clinics (117 teams), nutrition, water, sanitation and hygiene (WASH), child protection, education, and livelihoods support. They have supported over 45,000 children in formal education and 37,000 in non-formal education programs, reflecting a broad multisectoral approach to the crisis [E3][E5].

- In Gaza, MSF’s activities are primarily focused on emergency medical care and addressing acute health needs exacerbated by the blockade and conflict. Their operations include treatment of malnutrition, trauma care, and urgent response to cold-related illnesses among children during winter storms. However, their ability to operate is severely hampered by Israeli-imposed restrictions on NGO registration and aid imports [A1][A2][A4].

-

- Child protection and displacement: Sudan faces one of the world’s largest child displacement crises, with over 6.5 million children uprooted since April 2023, including one child forced to flee every 10 seconds. More than half of the displaced population are children, with approximately 2.8 million under five years old. Save the Children’s work includes protection services for these vulnerable children, addressing risks of violence, exploitation, and family separation [E1][E3].

- In Gaza, children are suffering from the compounded effects of conflict, blockade, and harsh winter conditions. MSF has reported child deaths due to cold exposure amid inadequate shelter and heating, with the blockade severely limiting humanitarian aid deliveries. The protection environment is deteriorating as access to essential services becomes increasingly restricted [A5][A7][A10].

-

- Health challenges and medical access: In Sudan, attacks on healthcare facilities have intensified, with nearly 1,000 people killed in at least 38 incidents targeting hospitals, ambulances, and medical convoys in the first half of 2025—tripling from the previous year. This has severely disrupted health services, including vaccination campaigns. MSF has highlighted a surge in measles cases in Darfur due to delayed vaccine deliveries, exacerbating child morbidity and mortality risks [A3][A9][E2].

- MSF in Gaza faces critical shortages of medical supplies and personnel due to Israeli restrictions. The organization has warned that new registration rules threaten the continuity of their operations, risking the collapse of vital medical services. The blockade has led to severe shortages of medicines and equipment, directly impacting the treatment of malnutrition, trauma, and winter-related illnesses among children [A2][A4][A8].

-

- Food security and nutrition: Sudan’s conflict has disrupted farming and food production, putting nearly half of the country’s children at risk of hunger. Save the Children provides nutrition support alongside health and livelihoods programs to mitigate this crisis. Despite ongoing efforts, malnutrition rates remain high, compounded by displacement and limited access to food [E6][E8].

- In Gaza, Save the Children reports that four out of five children are expected to face catastrophic levels of hunger in 2026. MSF’s medical teams are treating severe malnutrition cases, but the blockade and aid restrictions severely limit food and nutrition assistance. The winter season has further aggravated the situation, increasing vulnerability to illness and death among malnourished children [A6][A5].

-

- Education and psychosocial support: Sudan’s education system is in crisis, with over three-quarters of school-age children out of school due to conflict and displacement. Save the Children supports tens of thousands of children with formal and non-formal education, alongside psychosocial support to address trauma and mental health needs [E7][E5].

- In Gaza, education and psychosocial support are severely disrupted by ongoing conflict and blockade. While MSF’s primary focus is medical, the deteriorating humanitarian conditions contribute to widespread trauma among children, with limited access to protective and educational services [A1][A5].

-

- Logistical and operational challenges: Save the Children in Sudan has managed significant logistics achievements, including delivering 40 metric tonnes of medical supplies in November 2025—the largest NGO consignment since March—enabling sustained support to hundreds of health facilities, including in hard-to-reach areas [E4].

- Conversely, MSF in Gaza is facing existential operational risks due to Israeli-imposed NGO registration rules requiring detailed staff data, which MSF and other agencies warn breach data protection and endanger personnel. This has led to warnings of potential suspension or severe limitation of their humanitarian activities, threatening the delivery of life-saving aid [A2][A4][A8][E9].

-

- Advocacy and humanitarian access: Both organizations have engaged in strong advocacy. Save the Children highlights the scale of child displacement and attacks on healthcare in Sudan, calling for protection of civilians and unimpeded humanitarian access [E1][E2].

- MSF has repeatedly urged Israel to allow critical aid into Gaza, emphasizing the risk of child deaths from cold and hunger due to the blockade and administrative restrictions. They have publicly condemned the new NGO registration rules as a threat to humanitarian principles and the lives of vulnerable populations [A1][A5][A7][A8].

Limitations and uncertainties

- The SCUK Alumni archive provides detailed recent updates on MSF’s challenges in Gaza and some aspects of Sudan’s crisis but lacks direct reporting on Save the Children’s Sudan operations; external sources supplement this gap.

- Data on the precise scale of child mortality and morbidity in both contexts is limited by access constraints and rapidly evolving conditions, particularly in Gaza due to the blockade and in Sudan due to conflict and insecurity.

- The impact of Israeli NGO registration rules on MSF’s Gaza operations is ongoing and subject to change, with potential shifts in operational capacity difficult to predict.

- Sudan’s conflict dynamics and humanitarian access are fluid, with risks of sudden escalations or improvements that could alter the humanitarian landscape and response effectiveness.

Sources in our archive:

- [A1] MSF urges Israel to let critical aid into Gaza as children freeze to death – Al Jazeera (2025-12-20)

- [A2] MSF's vital humanitarian activities in Gaza at risk from Israeli registration rules – MSF – Médecins Sans Frontières (2025-12-22)

- [A3] Measles cases surge in Darfur, Sudan, despite months of calling for vaccination – MSF – Médecins Sans Frontières (2025-12-19)

- [A4] MSF’s vital humanitarian assistance at risk amid Israel’s new INGO registration rules for Gaza – Doctors Without Borders (2025-12-22)

- [A5] MSF Warns of Child Deaths in Gaza Due to Cold, Aid Blockade – ABNA English (2025-12-20)

- [A6] Gaza: Four out of Five Children to Face Catastrophic Levels of Hunger in 2026 – Save the Children US (2025-12-19)

- [A7] MSF says Gaza children are dying from cold, urges Israel to allow humanitarian aid – Egypt Today (2025-12-20)

- [A8] Doctors Without Borders warns Israeli measures threaten aid work in Gaza – سانا (2025-12-22)

- [A9] MSF, UN warn of measles surge in Darfur as vaccines fail to arrive – Sudan Tribune (2025-12-21)

- [A10] MSF: Gaza Children Dying From Cold Amid Winter Storms – – IMEMC News (2025-12-20)

External sources (outside our archive):

- [E1] https://www.savethechildren.org.uk/news/media-centre/press-releases/2025/sudan-one-child-every-10-seconds-has-been-forced-flee-their

- [E2] https://www.savethechildren.org.uk/news/media-centre/press-releases/2025/sudan-sharp-rise-attacks-healthcare-after-two-years-conflict

- [E3] https://www.savethechildren.net/news/sudan-about-7600-children-fleeing-homes-daily-world-s-largest-child-displacement-crisis

- [E4] https://www.savethechildren.net/news/largest-aid-delivery-ngo-march-reaches-sudan-40-tonnes-medicines-and-medical-supplies

- [E5] https://www.savethechildren.net/news/save-children-committed-protecting-children-sudan

- [E6] https://www.savethechildren.net/news/nearly-half-children-sudan-set-go-hungry-conflict-stops-farming

- [E7] https://www.savethechildren.net/news/more-three-quarters-sudans-children-out-school-new-academic-year-begins

- [E8] https://www.savethechildren.net/news/nearly-500-children-die-hunger-sudan-fighting-halts-life-saving-treatment-programmes

- [E9] https://www.theguardian.com/world/2025/dec/30/israel-to-ban-dozens-of-aid-agencies-from-gaza-as-10-nations-warn-about-suffering

Further reading (external sources consulted but not directly quoted):

- [F1] https://savethechildren.org.uk/news/media-centre/press-releases/2024/sudan-hundreds-of-unaccompanied-children-among-thousands-fleeing-latest-surge-of-fighting-

- [F2] https://savethechildren.net/news/sudan-one-child-every-10-seconds-forced-flee-their-home-conflict-began-two-years-ago

- [F3] https://savethechildren.net/news/over-28-million-children-under-five-forced-their-home-across-sudan

- [F4] https://savethechildren.net/news/sudan-over-1500-children-subjected-extreme-violence-conflict-breaks-records-crimes-against

- [F5] https://unicef.org/sudan/press-releases/gavi-save-children-and-unicef-collaborate-strengthen-immunization-services-sudan

-

This reply was modified 3 months, 2 weeks ago by

Site Admin.

Site Admin.

-

This reply was modified 1 month, 3 weeks ago by

Pete Smith.

Pete Smith.

14th January 2026 at 14:02 #61994A Call to Action: turn your experience into a living knowledge base

Many alumni carry lessons that never made it into a formal report: the workaround that kept a programme running, the early warning signal everyone missed, the staff-security choice that felt impossible at the time, the coordination habit that quietly saved weeks.

The problem is obvious: when people move on, that institutional knowledge goes with them. And in our world, that’s more than just a shame.

So the idea is to extend our AI-based research assistant Eglantyne to include a new, high-value source type: Practitioner Experience Notes, written by alumni. These won’t be news and they won’t be generic theory. They’ll be first-hand, field-smart reflections — indexed, searchable, and (when relevant) prioritised in answers. Answers that we will make available through our AI Research Assistant tool to anyone that asks; we want to share knowledge, not keep it to ourselves.

This is a genuine ‘many small contributions become something powerful’ project. If even a fraction of our alumni write a few pieces, we can build a resource that helps colleagues for years.

What we’re asking you to write

A Practitioner Experience Note is a short article based on your experience. Think: ‘Here’s what happened, here’s what we did, here’s what I wish someone had told me beforehand.’

We’re looking for notes on topics like this – not pretending this list is in any way exhaustive:

- Early warning & famine: signals, thresholds, politics of declaring, data quality, community insights vs dashboards

Example: Early warning systems for famine in Africa (1970s–80s): what we got right, what we didn’t. - Negotiation & access: working with authorities, non-state actors, red lines, humanitarian principles in practice

- Working with communities: feedback mechanisms, safeguarding, trust rebuilding after harm, accountability under stress

- Coordination realities: cluster meetings, duplication, info-sharing culture, how to make coordination useful rather than performative

- Staff security: decision-making under pressure; travel/compound rules; local partner risk; kidnaps/armed groups; comms blackouts

Example: Staff security decisions during the Rwanda crisis: what actually worked. - Supply chain & logistics: last-mile delivery, customs, cold chain, local procurement pitfalls

- Programme quality: rapid assessments, MEAL under constraints, adapting interventions mid-response

- Leadership & teams: managing mixed teams, burnout, duty of care, hard personnel calls

- Advocacy & media: speaking up safely, comms during conflict, protecting staff/partners while influencing change

If you’re not sure whether your idea ‘counts’, it probably does.

Suggested length

To make this easy to contribute to and easy to use:

- Ideal length: 800–1,500 words (this size is the ‘sweet spot’ for current AI technology behind the scenes)

- Short is fine: 400–800 words (‘one lesson, one story’)

- Longer is welcome when needed: up to 2,500 words if it genuinely benefits from depth

Some of you might already have even longer articles, or feel that the topics involved are so complex they require long articles. That’s fine. Please discuss with me and we will figure a way.

This is not meant to be academic. Clarity beats polish.

A simple recommended structure (so it’s easy to read and search)

You can follow this template loosely if you wish, but don’t feel constrained:

- Title (clear and specific)

- Where/when (country/region + approximate dates or period)

- Context in 5 lines (what was happening; what your role was)

- The problem (what made it hard/urgent/uncertain)

- What we did (decisions, actions, approaches)

- What worked / what didn’t (be honest — that’s the value)

- Lessons learned (3–7 bullet points is perfect)

- Watch-outs (risks, unintended consequences, red flags)

- If I had to do it again… (practical advice)

- Keywords (10–20 terms you’d want someone to search)

If you prefer a narrative style, that’s fine too — the key is that it ends with practical takeaways.

Sensible boundaries (important)

We want this to be useful and safe so the obvious guidelines given we are sharing this openly:

- No names or identifying details of individuals at risk (staff, partners, community members)

- Avoid operational specifics that could compromise security (routes, timings, sensitive methods, etc.)

- If you’re unsure, write it as if it might be widely read and we’ll help with light redaction if needed

- You can include ‘what happened’ without revealing ‘how to replicate it’

How it will work (high level)

We’ll provide a simple submission form where you can:

- select canonical country/countries and themes

- add start/end dates (even approximate)

- add keywords

- upload your article (Word/PDF — whatever’s easiest)

On our side, the system will index these as Practitioner Experience Notes. When someone asks Eglantyne a question, these notes will be treated as high-weight sources — not because they’re ‘more true’ than everything else, but because first-hand field learning is often the missing ingredient in generic summaries.

Also: the number of Practitioner Experience Notes pulled into any one answer will be small — often 1–5, rarely more — so each note can genuinely matter.

Your article will appear in ‘public domain’ searches where appropriate as a cited source. Because of GDPR, authorship will be defined as follows:

- If the search is being made by alumni, your name can be displayed, or you can stay anonymous if you wish.

- If the search is being made by public, authorship will be ‘Save the Children Alumni Association’. If we don’t do that and published names, we would need to go into a much more formal tier of legal compliance that we want to avoid if possible.

What to write first (if you want a prompt)

If you’d like a starting point, pick one:

- A decision I got right (and why).

- A decision I got wrong (and what I learned).

- The early warning sign we missed.

- The one coordination habit that changed everything.

- A security rule that sounded bureaucratic until it wasn’t.

- How to work effectively with [X] stakeholder in [Y] context.

- Three things I wish new arrivals knew in week one.

FAQs

- I worked for Save the Children for 10 years – how many Practitioner Notes can I write? As many as you like.

- I was working for another NGO when I learned some really important lessons. Can I write about that experience? Yes, but please use discretion and make explicitly clear this was not a Save the Children programme

- Is this field work only? I specialised in Grant funding in Finance and think I learned a lot that would be useful to pass on. Yes, we are expecting the majority of articles to be field based, but there are no restrictions at all. Please write and submit.

If you’re in: reply ‘Yes – I’ll write one/some (and a rough topic. Don’t worry if someone else is writing a similar topic; the similarities and differences will be really valuable information.)

And if you’d rather talk it through before writing: also fine.

— Pete

-

This reply was modified 1 month, 3 weeks ago by

Pete Smith.

Pete Smith.

21st January 2026 at 10:34 #63018Finally, if you are already into AI and have found the above explanations straightforward, here is a good article that explains technically what we’ve done ….

Self-RAG: When AI Questions Its Own Answers

Edition #243 | 21 January 2025

Jan 21, 2026Hello!

Welcome to today’s edition of Business Analytics Review!Today’s edition dives deeper into one of the most promising advancements in making AI truly dependable: Self-RAG: When AI Questions Its Own Answers. We’ll unpack this framework in greater detail, exploring its mechanics, real advantages, practical examples from industry settings, and why it’s becoming essential for analytics-driven decisions. As always, I’ll keep things conversational, blend in some technical depth with relatable insights, and guide you through it step by step.

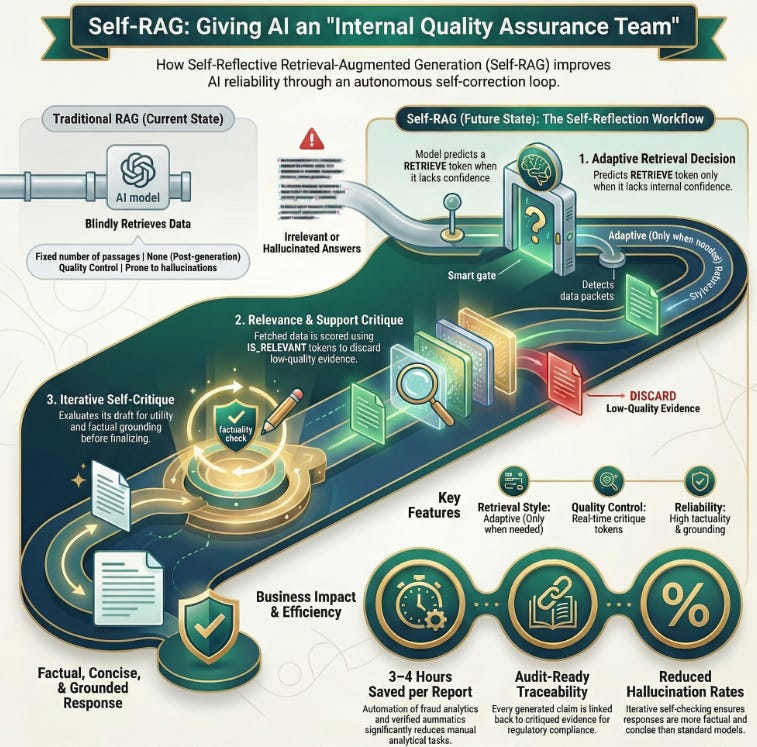

Understanding Self-RAG: The Core Idea and Evolution from Traditional RAG

Self-RAG, short for Self-Reflective Retrieval-Augmented Generation, builds directly on the classic Retrieval-Augmented Generation (RAG) approach. Traditional RAG pulls relevant documents from an external knowledge base every time a query comes in, then feeds them to the language model to generate a grounded response. This reduces hallucinations compared to pure LLMs, but it has limitations: it always retrieves a fixed number of passages (even when unnecessary), sometimes grabs irrelevant info, and doesn’t critically evaluate what it retrieves or generates.

Self-RAG, introduced in the 2023 paper by Akari Asai and team (”Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection”), flips this script. It trains a single language model to adaptively decide whether retrieval is needed at all, reflect on retrieved content for relevance and support, and critique its own draft outputs before finalizing. The model uses special reflection tokens like RETRIEVE, IS_RELEVANT, SUPPORTS, or IS_USEFUL to signal these decisions during generation.

Think of it as giving the AI an internal quality assurance team: it pauses mid-thought to ask, “Do I really need fresh data here?” or “Does this fact check out against what I just pulled?” This self-reflection loop makes responses more factual, concise, and tailored especially valuable in fast-moving fields like business analytics where outdated or unverified insights can lead to poor decisions.

Breaking Down the Self-RAG Workflow: Step-by-Step Mechanics

To make this concrete, here’s how Self-RAG typically operates in practice:

- Adaptive Retrieval Decision — For each segment (often sentence-level) of the response, the model predicts a reflection token like RETRIEVE. If the query is something the model knows confidently (e.g., basic definitions), it skips retrieval to save compute and time. If uncertainty arises, it triggers a search.

- Retrieval and Relevance Critique — Once passages are fetched, the model generates tokens like IS_RELEVANT or IS_SUPPORTED to score them. Irrelevant chunks get discarded, ensuring only high-quality evidence influences the output.

- Generation with Self-Critique — The model drafts candidate responses and uses critique tokens (e.g., SUPPORTS for factual grounding, USEFUL for overall utility) to evaluate them. It can even regenerate or select the best candidate iteratively until satisfied.

- Final Output Selection — The highest-scoring, most grounded segment wins. This creates a feedback mechanism where the model learns to be more precise over time.

In experiments from the original paper, Self-RAG outperformed standard RAG and even strong models like retrieval-augmented ChatGPT on tasks involving reasoning, fact verification, and long-form generation. It achieved higher factuality (fewer unsupported claims) while being more efficient no blind over-retrieval.

Real-World Impact and Industry Examples in Business Analytics

Why does this matter for analytics professionals and businesses? Self-RAG shines in scenarios demanding high accuracy and up-to-date knowledge without constant manual intervention.

Consider a financial analytics team forecasting trends: In a volatile market, the AI might retrieve recent SEC filings or news only when needed, then self-check if the data supports claims about revenue shifts. This reduces risky hallucinations in reports.

In retail or e-commerce, Self-RAG-powered tools can analyze customer behavior data alongside current market reports. One reported benefit in similar advanced RAG setups (like those at companies automating fraud analytics) is saving hours per report by generating accurate summaries with verified sources imagine cutting 3-4 hours off routine analytical tasks.

For enterprise knowledge assistants handling proprietary data, Self-RAG enables offline or edge-device use while maintaining reliability in regulated sectors like finance or healthcare. It supports audit-ready traceability: every claim links back to critiqued evidence.

Anecdotally, teams building internal chatbots find Self-RAG reduces follow-up questions from users because answers feel more trustworthy and complete. In one case inspired by industry patterns, a marketing analytics dashboard using reflective mechanisms spotted emerging consumer shifts faster, leading to timely campaign adjustments and measurable ROI gains.

Overall, Self-RAG delivers measurable improvements: better factual accuracy, lower hallucination rates, cost savings from efficient retrieval, and stronger alignment with compliance needs in 2026’s AI landscape.

Recommended Reads

- A comprehensive guide with step-by-step LangGraph implementation, showing how Self-RAG adds iterative reasoning and self-evaluation to traditional RAG pipelines. Check it out

- Explores Self-RAG mechanisms like reflection tokens, adaptive retrieval, and candidate critique, with practical LangChain examples and comparisons to standard approaches. Check it out

- Breaks down Self-RAG as an intelligent system that knows when to double-check, with details on on-demand retrieval, self-reflection, and advantages in factual accuracy for real applications. Check it out

11th February 2026 at 18:45 #66487Pete, this is some of the most helpful stuff on AI that I’ve read; thank you!

11th February 2026 at 21:52 #66504Pete, this is some of the most helpful stuff on AI that I’ve read; thank you!

Thanks. I always recommend Professor Hannah Fry as a great source if people want to know more. Easy to find her materials on Radio 4 and youtube.

I’m spending evenings teaching SCAA-GPT to find relevant PENs – hope to have something I can put in the newsletter by the end of the month.

-

AuthorPosts

- You must be logged in to reply to this topic.