Finally, if you are already into AI and have found the above explanations straightforward, here is a good article that explains, in technical terms, what we’ve done.

Self-RAG: When AI Questions Its Own Answers

Edition #243 | 21 January 2025

Hello!

Welcome to today’s edition of Business Analytics Review!

Today’s edition dives deeper into one of the most promising advancements in making AI truly dependable: Self-RAG: When AI Questions Its Own Answers. We’ll unpack this framework in greater detail, exploring its mechanics, real advantages, practical examples from industry settings, and why it’s becoming essential for analytics-driven decisions. As always, I’ll keep things conversational, blend in some technical depth with relatable insights, and guide you through it step by step.

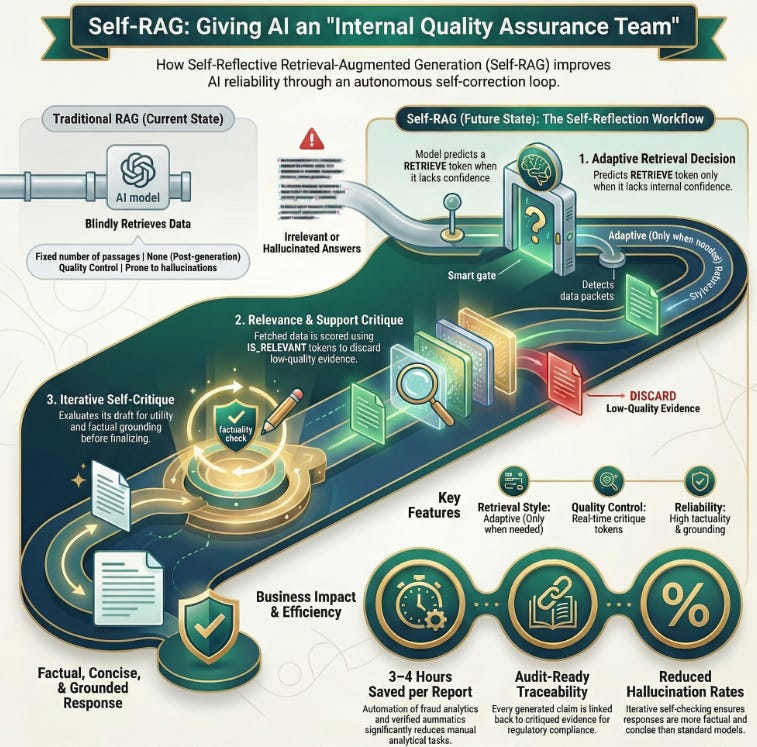

Understanding Self-RAG: The Core Idea and Evolution from Traditional RAG

Self-RAG, short for Self-Reflective Retrieval-Augmented Generation, builds directly on the classic Retrieval-Augmented Generation (RAG) approach. Traditional RAG pulls relevant documents from an external knowledge base every time a query comes in, then feeds them to the language model to generate a grounded response. This reduces hallucinations compared to pure LLMs, but it has limitations: it always retrieves a fixed number of passages (even when unnecessary), sometimes grabs irrelevant info, and doesn’t critically evaluate what it retrieves or generates.
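To make the baseline concrete, here is a minimal sketch of the classic retrieve-then-generate step. The documents, the word-overlap scoring, and the function names are all assumptions made for illustration; a real pipeline would use embedding similarity and an actual language model call.

```python
from collections import Counter

# Toy knowledge base; these documents are invented for illustration.
DOCS = [
    "Quarterly revenue rose 12 percent on strong online sales.",
    "The warehouse relocation was completed in March.",
    "Online sales growth was driven by repeat customers.",
]

def tokenize(text):
    """Lowercase words with surrounding punctuation stripped."""
    return [w.strip(".,?!").lower() for w in text.split()]

def overlap_score(query, doc):
    """Count shared words; a stand-in for a real embedding similarity."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum((q & d).values())

def retrieve_top_k(query, docs, k=2):
    """Classic RAG retrieval: always return exactly k passages."""
    ranked = sorted(docs, key=lambda doc: overlap_score(query, doc), reverse=True)
    return ranked[:k]

def build_prompt(query, passages):
    """Assemble the grounded prompt that would be sent to the language model."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"

question = "What drove online sales growth?"
passages = retrieve_top_k(question, DOCS, k=2)
prompt = build_prompt(question, passages)

# The limitation in action: an unrelated query still gets k passages.
unneeded = retrieve_top_k("What is 2 + 2?", DOCS, k=2)
```

Note that `unneeded` still holds two passages even though none of them helps with the question, which is exactly the fixed-retrieval behavior Self-RAG sets out to avoid.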

Self-RAG, introduced in the 2023 paper by Akari Asai and colleagues (“Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection”), flips this script. It trains a single language model to adaptively decide whether retrieval is needed at all, reflect on retrieved content for relevance and support, and critique its own draft outputs before finalizing. The model signals these decisions during generation with special reflection tokens. The paper defines four types (Retrieve, ISREL, ISSUP, and ISUSE), which this article spells out as RETRIEVE, IS_RELEVANT, IS_SUPPORTED, and IS_USEFUL.

Think of it as giving the AI an internal quality assurance team: it pauses mid-thought to ask, “Do I really need fresh data here?” or “Does this fact check out against what I just pulled?” This self-reflection loop makes responses more factual, concise, and tailored, which is especially valuable in fast-moving fields like business analytics, where outdated or unverified insights can lead to poor decisions.
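One way to picture reflection tokens is as markers interleaved with the generated text. The sketch below separates them from the visible answer. The `[NAME=value]` bracket syntax and the token values are assumptions made for illustration only; the actual model emits dedicated vocabulary tokens rather than bracketed strings.

```python
import re

# Hypothetical inline format: [NAME=value]. This syntax is an assumption
# for illustration, not the format used by the released model.
TOKEN_PATTERN = re.compile(
    r"\[(RETRIEVE|IS_RELEVANT|IS_SUPPORTED|IS_USEFUL)=([a-z0-9_]+)\]"
)

def split_reflection(segment):
    """Return (plain_text, {token_name: value}) for one generated segment."""
    tokens = dict(TOKEN_PATTERN.findall(segment))
    text = TOKEN_PATTERN.sub("", segment)
    text = " ".join(text.split())  # collapse whitespace left behind by removed tokens
    return text, tokens

raw = (
    "[RETRIEVE=yes] [IS_RELEVANT=relevant] Revenue rose 12 percent. "
    "[IS_SUPPORTED=fully] [IS_USEFUL=5]"
)
text, tokens = split_reflection(raw)
```

Here `text` holds only the sentence a user would see, while `tokens` carries the model's own judgments about that sentence, which downstream code can log or use for filtering.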

Breaking Down the Self-RAG Workflow: Step-by-Step Mechanics

To make this concrete, here’s how Self-RAG typically operates in practice:

- Adaptive Retrieval Decision: For each segment of the response (often a sentence), the model predicts a RETRIEVE reflection token. If the segment covers something the model knows confidently (e.g., basic definitions), it skips retrieval to save compute and time. If it is uncertain, it triggers a search.

- Retrieval and Relevance Critique: Once passages are fetched, the model generates an IS_RELEVANT token for each one. Irrelevant chunks get discarded, so only high-quality evidence influences the output.

- Generation with Self-Critique: The model drafts a candidate continuation for each retained passage and evaluates it with critique tokens: IS_SUPPORTED for factual grounding in the passage and IS_USEFUL for overall utility. In the paper this happens inside a segment-level beam search, so several candidates are kept and compared rather than a single draft being accepted blindly.

- Final Output Selection: The highest-scoring, most grounded segment wins. The critique behavior itself is learned during training; at inference time the weights stay fixed, so this feedback loop operates within a single response rather than teaching the model over time.
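The four steps above can be sketched as a small control loop. This is a toy under stated assumptions, not the paper's implementation: the word-overlap function stands in for the model's critique tokens, the `known` dictionary stands in for the model's retrieval decision, and the weights and threshold are arbitrary. The weighted sum loosely mirrors the idea of combining critique scores to rank candidates.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Candidate:
    text: str
    passage: Optional[str]
    relevance: float   # stand-in for the IS_RELEVANT critique
    support: float     # stand-in for the IS_SUPPORTED critique
    usefulness: float  # stand-in for the IS_USEFUL critique

def words(text):
    return {w.strip(".,?!:").lower() for w in text.split()}

def overlap(a, b):
    """Jaccard word overlap; a crude proxy for a learned critique score."""
    wa, wb = words(a), words(b)
    return len(wa & wb) / len(wa | wb) if (wa | wb) else 0.0

def score(c, weights=(1.0, 1.0, 0.5)):
    """Step 4: weighted sum of critique scores (weights are arbitrary here)."""
    w_rel, w_sup, w_use = weights
    return w_rel * c.relevance + w_sup * c.support + w_use * c.usefulness

def self_rag_answer(query, passages, known, min_relevance=0.15):
    # Step 1: adaptive retrieval decision. Skip retrieval if already known.
    if query in known:
        return Candidate(known[query], None, 0.0, 0.0, 1.0)
    # Step 2: relevance critique. Keep only passages that clear the threshold.
    relevant = [p for p in passages if overlap(query, p) >= min_relevance]
    # Step 3: draft one candidate per surviving passage and critique it.
    candidates = []
    for p in relevant:
        draft = f"According to the source: {p}"  # toy generator
        candidates.append(Candidate(draft, p, overlap(query, p), overlap(draft, p), 1.0))
    if not candidates:
        return Candidate("No supporting evidence found.", None, 0.0, 0.0, 0.0)
    # Step 4: the highest-scoring candidate wins.
    return max(candidates, key=score)

KNOWN = {"What does RAG stand for?": "Retrieval-Augmented Generation."}
PASSAGES = [
    "Q3 revenue rose 12 percent year over year.",
    "The office cafeteria menu changes weekly.",
]

grounded = self_rag_answer("How much did Q3 revenue rise?", PASSAGES, KNOWN)
direct = self_rag_answer("What does RAG stand for?", PASSAGES, KNOWN)
empty = self_rag_answer("Who won the match?", PASSAGES, KNOWN)
```

The three calls exercise the three paths: `grounded` is backed by the revenue passage, `direct` skips retrieval entirely, and `empty` falls back to a refusal because no passage clears the relevance threshold.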

In experiments from the original paper, Self-RAG (at 7B and 13B parameters) outperformed ChatGPT and retrieval-augmented Llama2-chat on open-domain QA, reasoning, and fact verification tasks, and it improved factuality and citation accuracy in long-form generation. Because retrieval is triggered on demand, it also avoids blind over-retrieval.

Real-World Impact and Industry Examples in Business Analytics

Why does this matter for analytics professionals and businesses? Self-RAG shines in scenarios demanding high accuracy and up-to-date knowledge without constant manual intervention.

Consider a financial analytics team forecasting trends: In a volatile market, the AI might retrieve recent SEC filings or news only when needed, then self-check if the data supports claims about revenue shifts. This reduces risky hallucinations in reports.

In retail or e-commerce, Self-RAG-powered tools can analyze customer behavior data alongside current market reports. One reported benefit in similar advanced RAG setups (like those at companies automating fraud analytics) is saving hours per report by generating accurate summaries with verified sources. Imagine cutting 3-4 hours off routine analytical tasks.

For enterprise knowledge assistants handling proprietary data, Self-RAG enables offline or edge-device use while maintaining reliability in regulated sectors like finance or healthcare. It supports audit-ready traceability: every claim links back to critiqued evidence.
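As a rough illustration of what audit-ready traceability could look like in practice, the sketch below attaches critiqued evidence to each claim and serializes the result. The record layout, field names, source identifiers, and the rule that a claim needs at least one fully supporting passage are all assumptions for this example, not part of Self-RAG itself.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List

@dataclass
class Evidence:
    source_id: str   # hypothetical document identifier
    excerpt: str
    relevance: str   # value of the relevance critique for this passage
    support: str     # value of the support critique for the claim

@dataclass
class Claim:
    text: str
    evidence: List[Evidence] = field(default_factory=list)

    def is_traceable(self):
        """Assumed policy: at least one passage must fully support the claim."""
        return any(e.support == "fully" for e in self.evidence)

def audit_report(claims):
    """Serialize claims with their evidence and a traceability flag."""
    records = [{**asdict(c), "traceable": c.is_traceable()} for c in claims]
    return json.dumps(records, indent=2)

claims = [
    Claim(
        "Q3 revenue rose 12 percent.",
        [Evidence("filing-001", "Revenue increased 12 percent in Q3.", "relevant", "fully")],
    ),
    Claim("Margins will double next year."),  # no evidence attached
]
report = audit_report(claims)
```

A reviewer can then filter the report for records where `traceable` is false and send those claims back for manual checking.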

Anecdotally, teams building internal chatbots find Self-RAG reduces follow-up questions from users because answers feel more trustworthy and complete. As an illustrative scenario modeled on common industry patterns, a marketing analytics dashboard using reflective mechanisms could spot emerging consumer shifts faster, allowing timely campaign adjustments that can translate into measurable ROI gains.

Overall, Self-RAG delivers measurable improvements: better factual accuracy, lower hallucination rates, cost savings from efficient retrieval, and stronger alignment with compliance needs in 2026’s AI landscape.

Recommended Reads

- A comprehensive guide with step-by-step LangGraph implementation, showing how Self-RAG adds iterative reasoning and self-evaluation to traditional RAG pipelines.

- Explores Self-RAG mechanisms like reflection tokens, adaptive retrieval, and candidate critique, with practical LangChain examples and comparisons to standard approaches.

- Breaks down Self-RAG as an intelligent system that knows when to double-check, with details on on-demand retrieval, self-reflection, and advantages in factual accuracy for real applications.